Audio Unit ElementsĪn audio unit element is a programmatic context that is nested within a scope. This version of Audio Unit Programming Guide does not discuss group scope or part scope.

Part scope: A context specific to managing the various voices of multitimbral instrument units

Group scope: A context specific to the rendering of musical notes in instrument units There are two additional audio unit scopes, intended for instrument units, defined in AudioUnitProperties.h: Host applications can also query the global scope of an audio unit to get these values.

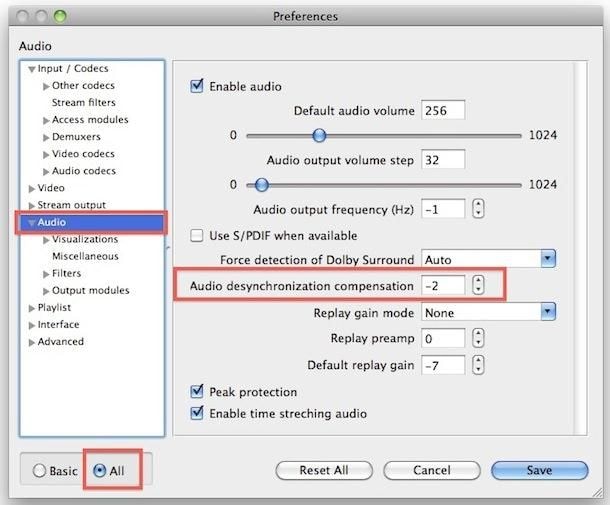

#MAC AUDIO INPUT FREQUENCY CODE#

Code within an audio unit addresses its own global scope for setting or getting the values of such properties as: Global scope: The context for audio unit characteristics that apply to the audio unit as a whole. The output scope is used for most of the same things as input scope: connections, defining additional output elements, setting an output audio data stream format, and setting output levels in the case of a mixer unit with multiple outputs.Ī host application, or a downstream audio unit in an audio processing graph, also addresses the output scope when invoking rendering. Output scope: The context for audio data leaving an audio unit. Host applications also use the input scope when registering a render callback, as described in Render Callback Connections. Code in an audio unit, a host application, or an audio unit view can address an audio unit’s input scope for such things as the following:Īn audio unit defining additional input elementsĪn audio unit or a host setting an input audio data stream formatĪn audio unit view setting the various input levels on a mixer audio unitĪ host application connecting audio units into an audio processing graph Input scope: The context for audio data coming into an audio unit. Listing 2-1 Using “scope” in the GetProperty method For example, Listing 2-1 shows an implementation of a standard GetProperty method, as used in the effect unit you build in Tutorial: Building a Simple Effect Unit with a Generic View: You use scopes when writing code that sets or retrieves values of parameters and properties. Unlike the general computer science notion of scopes, however, audio unit scopes cannot be nested. Figure 2-1 Audio unit architecture for an effect unit Audio Unit ScopesĪn audio unit scope is a programmatic context. For discussion on the section marked DSP in the figure, representing the audio processing code in an effect unit, see Synthesis, Processing, and Data Format Conversion Code. 
This section describes each of these parts in turn. Figure 2-1 illustrates these parts as they exist in a typical effect unit. The internal architecture of an audio unit consists of scopes, elements, connections, and channels, all of which serve the audio processing code. You also learn about the steps you take when you create an audio unit. In this chapter you learn about the architecture and programmatic elements of an audio unit. You can optionally add a custom user interface, or view, as described in the next chapter, The Audio Unit View. This part exists within the MacOS folder inside the audio unit bundle as shown in Figure 1-2. When you develop an audio unit, you begin with the part that performs the audio work.
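As a rough illustration of how a scope and an element together address a value, the following C++ sketch stores property values keyed by property ID, scope, and element. Everything in it is an assumption made for the example: ToyAudioUnit, Scope, Element, PropertyID, and kToyStreamSampleRate are hypothetical stand-ins, not the Core Audio SDK types, and the real GetProperty method has a different signature. The sketch models only the addressing idea: scopes sit side by side rather than nesting, while elements are numbered within a scope.

```cpp
#include <cstdint>
#include <map>
#include <tuple>

// Hypothetical stand-ins for illustration only; not the Core Audio types.
enum class Scope : std::uint32_t { Global, Input, Output };
using Element = std::uint32_t;     // index of an element within one scope
using PropertyID = std::uint32_t;

// Hypothetical property ID used only in this sketch.
constexpr PropertyID kToyStreamSampleRate = 1;

class ToyAudioUnit {
public:
    // Store a value at the address (property, scope, element).
    void SetProperty(PropertyID id, Scope scope, Element element, double value) {
        store_[std::make_tuple(id, scope, element)] = value;
    }

    // Look up a value at the address (property, scope, element).
    // Returns false and leaves outValue untouched if nothing is stored there.
    bool GetProperty(PropertyID id, Scope scope, Element element,
                     double &outValue) const {
        auto it = store_.find(std::make_tuple(id, scope, element));
        if (it == store_.end()) {
            return false;
        }
        outValue = it->second;
        return true;
    }

private:
    // Scopes are peers in the key, never nested in one another;
    // the element index only has meaning inside its scope.
    std::map<std::tuple<PropertyID, Scope, Element>, double> store_;
};
```

In this sketch, setting kToyStreamSampleRate on element 0 of the input scope leaves element 0 of the output scope untouched, because the scope is part of the address. A lookup on an element or scope that was never set reports failure instead of falling back to another scope.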